New technologies are providing opportunities for fast data collection. This post shows how web-scraping tools in Python made it possible to collect, structure, visualise and analyse the occupations and skills demanded in more than 6 000 online job vacancies (OJVs) published on the most popular job portal in North Macedonia.

Collecting data from web portals

In this example, I used web scraping techniques and tools in Python to download online job vacancies and their content from the most popular OJV portal in North Macedonia, www.najdirabota.com.mk. In total, more than 800 web pages were downloaded from the website, covering vacancies posted between August 2018 and August 2019.
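As a rough illustration of this step, the sketch below pulls vacancy titles out of a listing page using only the Python standard library. The `job-title` class, the sample HTML and the `fetch_page` helper are assumptions made for the example; the portal's real markup and the scraper used for this study are not reproduced here.

```python
from html.parser import HTMLParser
from urllib.request import Request, urlopen


class VacancyListParser(HTMLParser):
    """Collect the text of every <a class="job-title"> element.

    The tag and class name are hypothetical; real portal markup will differ.
    """

    def __init__(self):
        super().__init__()
        self._in_title = False
        self.titles = []

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if tag == "a" and "job-title" in classes:
            self._in_title = True
            self.titles.append("")

    def handle_data(self, data):
        if self._in_title:
            self.titles[-1] += data

    def handle_endtag(self, tag):
        if tag == "a":
            self._in_title = False


def fetch_page(url, timeout=30):
    """Download one page as text (defined but not called in this sketch)."""
    request = Request(url, headers={"User-Agent": "ojv-research-sketch"})
    with urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8", errors="replace")


def parse_titles(html):
    """Return the stripped vacancy titles found in one listing page."""
    parser = VacancyListParser()
    parser.feed(html)
    parser.close()
    return [title.strip() for title in parser.titles]


# Invented listing page standing in for a downloaded one.
sample_html = """
<ul>
  <li><a class="job-title" href="/job/1">Software Developer</a></li>
  <li><a class="job-title" href="/job/2">Sales Agent</a></li>
  <li><a href="/about">About us</a></li>
</ul>
"""
print(parse_titles(sample_html))
```

In a real run, `fetch_page` would be called once per listing page, ideally with a pause between requests and after checking the site's terms of use.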

The downloaded web content was raw, unstructured data that needed to be cleaned and pre-processed before further analysis. In this step, special characters (^, [, \t, ], +, |, [, \t, ], +, $) and private data, such as employer addresses and phone numbers, were removed.
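A minimal sketch of such a cleaning pass is shown below. It reads the character list above as a whitespace-trimming regular expression, which is only one possible interpretation, and the phone pattern is a deliberately loose assumption rather than the rule applied in the study.

```python
import re

# Leading and trailing spaces/tabs on each line (an assumed reading of the
# character list above).
EDGE_WHITESPACE = re.compile(r"^[ \t]+|[ \t]+$", flags=re.MULTILINE)

# Hypothetical phone pattern: optional "+", then at least 8 characters of
# digits and separators, starting and ending with a digit. It is loose and
# would also remove other long digit runs (for example ISO dates), so a real
# pipeline would need a stricter rule.
PHONE = re.compile(r"\+?\d[\d /\-]{6,}\d")


def clean_text(raw):
    """Strip phone-like numbers and stray whitespace from scraped text."""
    text = PHONE.sub("", raw)
    text = EDGE_WHITESPACE.sub("", text)
    # Collapse runs of spaces or tabs left inside a line.
    return re.sub(r"[ \t]{2,}", " ", text)


print(repr(clean_text("\t  Sales agent  \nCall +389 2 123 456 now\t")))
```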

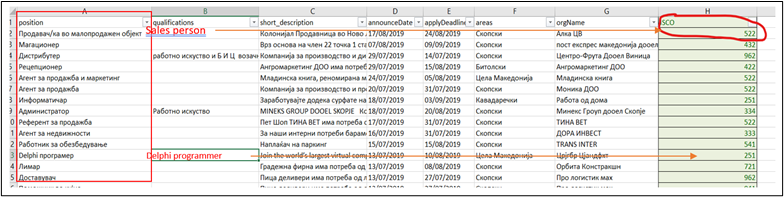

The next step was to identify the data column containing occupation names and to match it against the ISCO classification at the 3-digit level, as shown below.

Source: print screen from Excel
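The matching itself was done in a spreadsheet; as an alternative illustration, the sketch below uses fuzzy string matching from Python's standard library. The `TITLE_TO_ISCO` lookup is a tiny hypothetical table built for the example, and the 0.8 cutoff is an arbitrary choice.

```python
from difflib import get_close_matches

# Hypothetical lookup from common title variants to ISCO-08 3-digit codes.
TITLE_TO_ISCO = {
    "software developer": "251",
    "software engineer": "251",
    "cook": "512",
    "shop salesperson": "522",
    "truck driver": "833",
}


def match_isco(title, cutoff=0.8):
    """Return the ISCO 3-digit code of the closest known title, or None."""
    normalised = " ".join(title.lower().split())
    hits = get_close_matches(normalised, list(TITLE_TO_ISCO), n=1, cutoff=cutoff)
    return TITLE_TO_ISCO[hits[0]] if hits else None


for advertised in ["Software Developer", "Truck  drivers", "Dentist"]:
    print(advertised, "->", match_isco(advertised))
```

Titles that return `None` would still need manual review, which is where most of the effort in this step tends to go.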

At this stage, I had initially expected to obtain regional data in order to assess skills needs at the regional and local levels. However, in the pre-processing phase, I found that too many of the job location fields were empty and that some vacancies had more than one job location entry. I therefore skipped this functionality in the hope of finding better-structured data elsewhere.

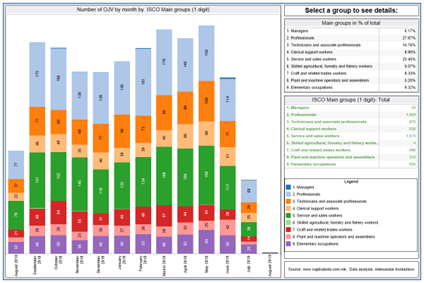

After aggregating the results at the ISCO 3-digit level, it was easy to analyse the data at various levels, from 1-digit (major groups) and 2-digit (sub-major groups) to 3-digit ISCO groups. The publication dates of the vacancies also helped me to reconstruct monthly demand by occupation group. For the visualisation of the results, I used the data visualisation tool Tableau, which allowed me to publish the results on a free server and create interactive dashboards for data presentation (see below).
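Because ISCO codes are hierarchical, rolling 3-digit codes up to higher levels only requires truncating the code. The sketch below shows the idea with a handful of invented records; the dates and codes are placeholders, not data from the portal.

```python
from collections import Counter

# Invented records: (posting date in ISO format, ISCO 3-digit code).
vacancies = [
    ("2018-09-03", "251"),
    ("2018-09-17", "251"),
    ("2018-09-20", "522"),
    ("2018-10-02", "241"),
    ("2018-10-05", "833"),
]


def aggregate(records, digits):
    """Count vacancies per ISCO group at the given number of digits (1 to 3)."""
    return Counter(code[:digits] for _, code in records)


def monthly_demand(records, digits=1):
    """Count vacancies per (year-month, ISCO group) pair."""
    return Counter((day[:7], code[:digits]) for day, code in records)


print(aggregate(vacancies, 1))
print(aggregate(vacancies, 2))
print(monthly_demand(vacancies))
```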

Note: The print screen can be seen here: https://public.tableau.com/profile/aleksandarkostadinov#!/vizhome/Tableau_15891784046170/ISCO

To check the results obtained from the web-scraped data, I compared them with the State Statistical Office (SSO) Job Vacancies report. The period chosen for the SSO’s survey data was July 2018 to June 2019, which is the closest comparable period to that covered by the web-scraped data (15 August 2018 to 15 August 2019). As expected, the largest discrepancies are among “Professionals”: web-based demand for Professionals is much higher than the demand reported in the SSO’s Job Vacancies survey.

On the other hand, elementary occupations and agricultural occupations are underrepresented, because employers are less likely to look for those types of workers on web portals and instead use other recruitment channels.
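One simple way to express such a comparison is the gap, in percentage points, between each group's share in the two sources. The counts in the sketch below are made up purely to show the calculation; they are not figures from the portal or from the SSO.

```python
def shares(counts):
    """Convert absolute counts into percentage shares of the total."""
    total = sum(counts.values())
    return {group: 100 * n / total for group, n in counts.items()}


def share_gap(web_counts, survey_counts):
    """Web share minus survey share, in percentage points, per group."""
    web, survey = shares(web_counts), shares(survey_counts)
    groups = sorted(set(web) | set(survey))
    return {g: round(web.get(g, 0.0) - survey.get(g, 0.0), 1) for g in groups}


# Made-up counts, for illustration only.
web = {"Professionals": 50, "Elementary occupations": 10, "Other": 40}
survey = {"Professionals": 20, "Elementary occupations": 30, "Other": 50}

print(share_gap(web, survey))
```

A positive gap means the group is overrepresented online relative to the survey, and a negative gap means it is underrepresented.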

Analysing skills demand

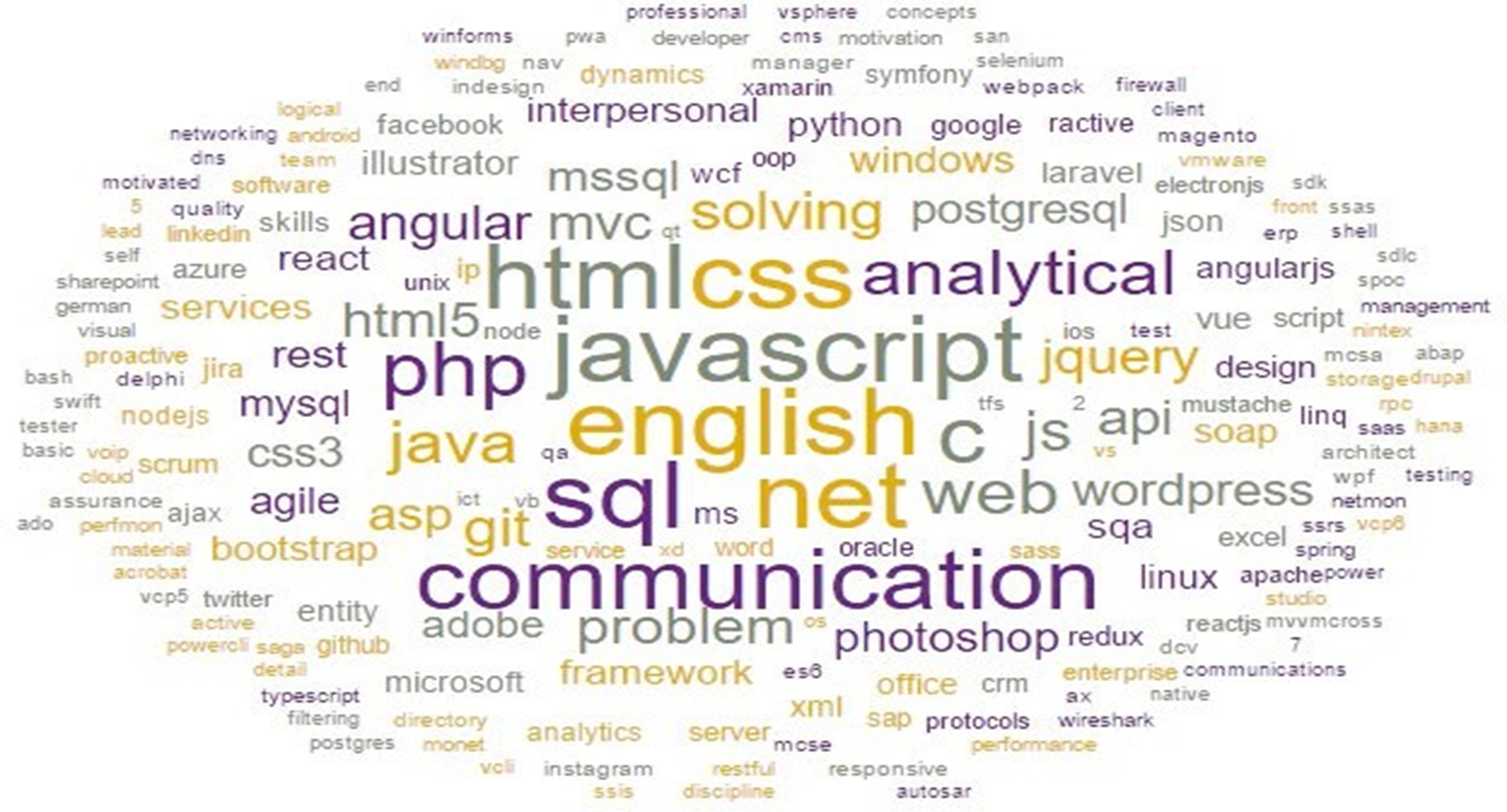

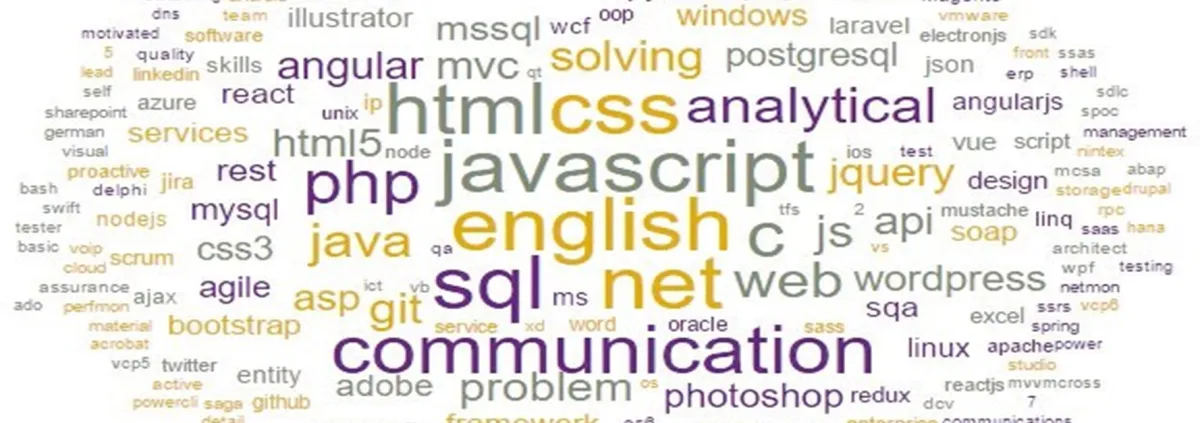

Another use of the data was to analyse a specific ISCO occupational group: 251, Software and applications developers and analysts. After collecting and pre-processing the job description fields for this group, I used the machine learning tool Orange3 to perform text analysis and produce a word cloud showing the skills and qualifications most frequently demanded by employers looking for software and applications developers.
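A word cloud is essentially a picture of term frequencies. The sketch below is not the Orange3 workflow used here; it is a standard-library approximation of the counting behind it, with an invented stopword list and invented job descriptions.

```python
import re
from collections import Counter

# Small illustrative stopword list; a real analysis would use a fuller one.
STOPWORDS = {"and", "or", "the", "a", "of", "in", "with", "for", "to", "is"}


def term_frequencies(descriptions, top=5):
    """Return the most frequent non-stopword terms across job descriptions."""
    counts = Counter()
    for text in descriptions:
        # Tokens start with a letter and may contain digits, '+', '#' or '.'.
        tokens = re.findall(r"[a-z][a-z0-9+#.]*", text.lower())
        cleaned = (token.rstrip(".") for token in tokens)
        counts.update(token for token in cleaned if token not in STOPWORDS)
    return counts.most_common(top)


# Invented descriptions standing in for the ISCO 251 vacancy texts.
descriptions = [
    "Experience with Python and SQL.",
    "Python, JavaScript and Git",
    "Knowledge of SQL and Python is required",
]
print(term_frequencies(descriptions, top=2))
```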

Real-time online job monitoring

The most important benefit of this methodology is the ability to monitor real-time data from online job vacancy portals. This can then be used to develop policy guidance regarding job demand trends. Based on data of advertised vacancies, we can analyse the daily volume of job vacancies by occupation and location, amongst other possibilities.

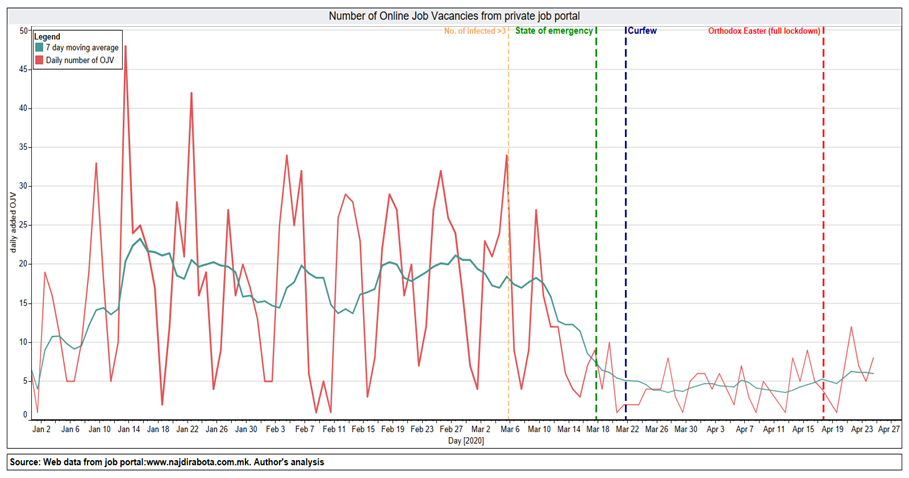

This discovery was really helpful, and its potential was tested during the COVID-19 lockdowns in North Macedonia. As presented in the chart below, the number of daily vacancies had risen by mid-January 2020, reaching nearly 23 new job vacancies per day (on a 7-day average). The rising trend continued until the beginning of March 2020, at which point there was an increase in the cases of COVID-19 detected in the country. A sharp decline in OJVs as of early April is clearly visible.

In the period after a ‘state of emergency’ was declared and a police curfew introduced, the number of new OJVs decreased further, to between one and five per day. Rumours about relaxing lockdown measures after the Orthodox Easter had a positive impact on employers’ expectations, with a short upward trend showing more OJVs posted towards the end of April 2020.

Number of daily and weekly OJVs on the job portal, 1 January–25 April 2020

Source: http://www.najdirabota.com.mk/. Author’s calculations.
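The 7-day average mentioned above can be computed from posting dates alone. The sketch below counts vacancies per calendar day, fills in days with no postings as zero, and then averages over a trailing 7-day window; the posting dates are invented for the example.

```python
from collections import Counter, deque
from datetime import date, timedelta


def daily_counts(posting_dates, start, end):
    """Return (day, count) for every calendar day from start to end inclusive."""
    counts = Counter(posting_dates)
    n_days = (end - start).days + 1
    days = (start + timedelta(days=offset) for offset in range(n_days))
    return [(day, counts.get(day, 0)) for day in days]


def rolling_mean(series, window=7):
    """Trailing moving average; emitted only once a full window is available."""
    recent = deque(maxlen=window)
    smoothed = []
    for day, count in series:
        recent.append(count)
        if len(recent) == window:
            smoothed.append((day, sum(recent) / window))
    return smoothed


# Invented posting dates: 7 vacancies on 1 January and 14 on 8 January.
postings = [date(2020, 1, 1)] * 7 + [date(2020, 1, 8)] * 14
series = daily_counts(postings, date(2020, 1, 1), date(2020, 1, 8))
smoothed = rolling_mean(series)
print(smoothed)
```

Filling the empty days with zeros matters: without it, quiet periods such as the lockdown weeks would be skipped rather than pulling the average down.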

Future considerations

This blog post offers some insight into how web scraping methods can be applied to analyse online job vacancies. It is important, however, to bear in mind the legal risks that may arise from web scraping, as web portal owners do not always welcome or tolerate this activity.

The use of online platforms and social platforms has become increasingly popular during the COVID-19 and post-COVID period. This trend is expected to continue, with more employers embracing the convenience and accessibility of online job portals.

As digitalisation accelerates, demand for digital skills is increasing significantly as well. With this methodology, policymakers can estimate this demand by occupation and location and offer education and training courses that meet it. Traditional methods for collecting data on skills and occupational needs are inefficient, expensive and not inclusive.

Nonetheless, despite the many advantages of web scraping methods, there remain some challenges and potential setbacks:

- The quality and consistency of data entered on the web portal;

- A lack of comparable classifications and methodologies;

- Geographical representation;

- Double input of jobs;

- Low representation of elementary, agricultural and administrative occupations, as workers for these are usually hired through other channels.
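The double input of jobs, for instance, could be approached with a simple de-duplication pass. The sketch below treats two vacancies as duplicates when employer and title match after normalisation; the field names and records are hypothetical, and this is not necessarily how the full paper handles the issue.

```python
def normalise(value):
    """Lower-case a string and collapse internal whitespace."""
    return " ".join(value.lower().split())


def dedupe(vacancies, key_fields=("employer", "title")):
    """Keep the first vacancy seen for each normalised key.

    A genuinely re-advertised post would also be dropped, so a real pipeline
    might add a date window to the key.
    """
    seen = set()
    unique = []
    for vacancy in vacancies:
        key = tuple(normalise(vacancy[field]) for field in key_fields)
        if key not in seen:
            seen.add(key)
            unique.append(vacancy)
    return unique


# Invented records with hypothetical field names.
records = [
    {"employer": "Acme DOO", "title": "Software Developer"},
    {"employer": "acme  doo", "title": "software developer "},
    {"employer": "Acme DOO", "title": "Sales Agent"},
]
print(len(dedupe(records)))
```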

These challenges are addressed in the full paper, which includes the Python code and can be accessed here:

European Training Foundation, Bardak, U., Rosso, F., Fetsi, Changing skills for a changing world – Understanding skills demand in EU neighbouring countries, Publications Office, 2021
